Eye tracking is one of the best-known tools in what most people call ‘neuromarketing’, a type of market research based on neuroscience.

Anyone who has ever seen a practical example of this method being used will have noticed that, when watching a video or looking at a photograph, people will mainly focus on faces.

For example, in both this Mona Lisa example, borrowed from Neurons Inc, and the one next to it, borrowed from TeaCup Lab, the face stands out more than anything else.

This happens in most cases where eye tracking is applied.

Professor Thomas Zoëga Ramsøy (1), quoting other researchers (2), points out that eye tracking has some limitations when trying to accurately read emotions reflected on the observed person’s face. However, it remains a very useful technique. In fact, we are magnetised by other people’s faces.

Historical discovery

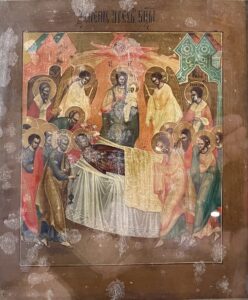

Last summer, when visiting the Poblet Abbey museum in Poblet (Tarragona, Spain), I saw two 17th-century works of art by unknown artists. This is a photo I took of the first one.

Although the information provided by the museum was minimal, I believe this polychrome icon is likely to be an Orthodox Church work depicting the death of the Virgin Mary.

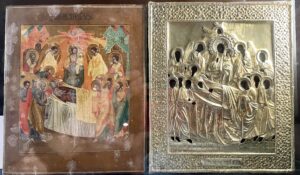

Next to it, there was a second work of art – this one in silver – complementing the first one.

It does not take much effort to notice that the work on the right fits onto the one on the left. When they are superimposed, all you can see of the first one is the faces of its subjects.

So, although the neuroscientific measuring techniques we have today did not exist at the time, by the 17th century the seed of neuromarketing had already been planted, and the seed of eye tracking in particular.

Even then, people knew that we are strongly drawn to faces, either because they help us to understand people, or because they are our main ‘speaker’ when interacting with others.

And they framed them in silver!

Why are faces so important?

Due to their ability to emit and capture signals (technically called sense data or stimuli), faces are crucial in every personal interaction process.

A face enables us to notice what our interlocutor is feeling.

For example, when a person smiles at someone else, they send a happiness signal. Once that emotion is detected, the person receiving it will smile back, as we can see in this cute video.

(3)

So, looking at other people’s faces allows us to feel something as important as empathy, made possible by our mirror neurons.

When a face is hidden, like behind a mask, those looking at it lack any clues about the person wearing it.

With no individual facial expressions, these five people generate apprehension.

And that is likely to hinder any chance of interacting with any of them.

Without any sense data, when confronted with a hidden face, our brain is disconcerted in its typical neuro-journey: a process that starts with the detection of an outside stimulus, continues with emotional activation and an understanding of what is happening out there, and ends with the person’s final behaviour.

For example, during a robbery, a robber wearing a mask gives us no clues about their possible nervousness, their likely next move, their attitude, and so on. So, they have an advantage over those they are robbing.

So, our faces allow us to be, and be seen as, members of a society and, ultimately, individuals.

Four practical implications

1.

Video-game addiction is increasing day by day, and players inhabit a locus, or mental space, where they interact for hours on end with fictional characters. Furthermore, we are beginning to see a technological base that will also allow people to live in metaverses for many hours and with very different purposes.

People who frequently receive some kind of gratification over long periods (for example, a simple ‘like’ or an achievement resulting from a personal skill) are highly likely to become addicted to that gratification, due to a dopamine overdose.

Putting aside other psychological or physical side effects, I believe we can state that:

Those addicted to video games and to metaverses will be facing a serious problem: their decreased ability to read other people’s faces.

That is, they will be less empathetic.

And empathy is crucial for living in society, for negotiating, for being part of a team, for falling in love, and so on.

2.

There is no doubt that automation will bring an end to some tasks and professions. This article is being written by a person, but I have already read some – such as the one published in the Spanish newspaper La Vanguardia by Josep M. Ganyet (on December 13, 2021) – that were drafted by artificial intelligence (4).

Some professions, like yours or mine, will be cannibalised, at least partially, by AI.

What looks clear is that robots will find it a lot harder to steal your job if you are able to read faces, interpreting them in their context.

3.

Do not hesitate to look kindly at the faces of your colleagues, providers, customers, bosses and shareholders. And do not hesitate either to make your own face more expressive, supported by related skills such as voice modulation.

If you add a genuine smile, oxytocin will even do its job and you will achieve a stronger personal connection.

People will pay more attention to you, even your boss!

4.

Finally, if you have a face-related business, you should know that you own a treasure.

The face – as the body’s main tool for expression – will need to be properly looked after. Facial cosmetics, hairdressing, orthodontics, expressive eyewear, etc. will be increasingly relevant.

There is also another activity that requires intensive use of your face: giving presentations to groups.

Are you one of them?

© Author: Lluis Martinez-Ribes, co-founder of m+f=! and ESADE Visiting Prof. With the support of Marina Font, Rosa Franch and Carla Vallès. BCN, January 2022.

_______________________________

BIBLIOGRAPHY AND REFERENCES

(1) Zoëga Ramsøy, T. (January 17th, 2022). Can we reliably use facial expressions to track and understand customers? LinkedIn post. https://www.linkedin.com/feed/update/urn:li:activity:6888806129866997760/

(2) Barrett, L. F., Adolphs, R., Marsella, S., Martinez, A. M., & Pollak, S. D. (2019). Emotional expressions reconsidered: Challenges to inferring emotion from human facial movements. Psychological Science in the Public Interest, 20, 1–68. doi:10.1177/1529100619832930 https://journals.sagepub.com/doi/10.1177/1529100619832930

(3) Inserted video. Contributing Entropy. (June 14th, 2020). Baby smiles at mom | Baby turns mom face and adorably smiles. YouTube. www.youtube.com/watch?v=LcRfOu3oRSA

(4) Article drafted by artificial intelligence: Ganyet, J.M. (December 13th, 2021). AI and Journalism. La Vanguardia. https://www.lavanguardia.com/economia/20211213/7924460/ia-periodismo.html